About a month ago a company (Reviewclub) gave me the opportunity to test the SumUp 3G+ payment kit. Reviewclub lets people test a product for 4 weeks; in exchange, they give their honest opinion after a few weeks of use and post it on the website of an online retailer.

At the end of the test the user is often given the opportunity to purchase the product at a discount, or to send it back using a prepaid shipping label. I purchased this device, and since it was now my property, I was very curious which cellular operator these devices use. Within 24 hours of purchasing it, my curiosity had rendered it useless, and I'd like to prevent others from doing the same. Oh, and for the curious ones: the SIM card in my device was from Soracom.

Initial impressions

The SumUp 3G is a pretty nifty device for people who don't process many payments and don't need to print detailed receipts (listing individual products) quickly. The purchase price is relatively low, and there are no monthly costs. Despite that, SumUp includes a SIM card with a data plan, which allows the user to accept payments anywhere that WiFi, 4G, or Bluetooth (via a phone) connectivity is available.

The device had its quirks: the payment terminal didn't always fit snugly in the printer dock, charging didn't always work, and sometimes after a few Apple Pay payments in a row the device would say "insert card", which is obviously impossible when the card is a phone. Given the target audience I'd be able to forgive this, since the barrier to getting started with card payments is pretty low. However, there is one HUGE issue:

User hostile design

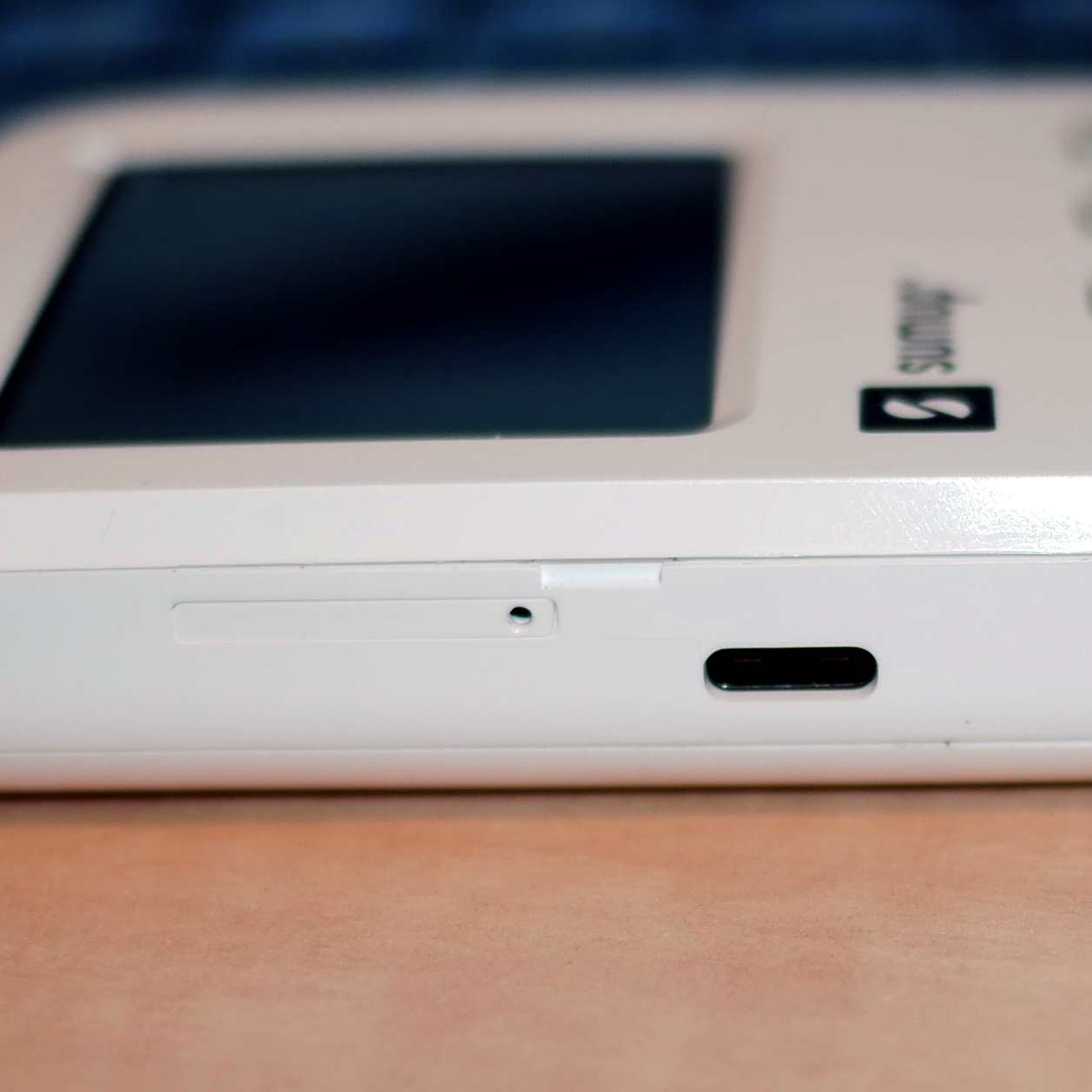

|

| The forbidden hole |

In my opinion this device is booby-trapped. It has a user-facing slot that looks very similar to the one we know from almost every smartphone produced in the past decade: a plastic tray with a small hole. You can insert a paperclip in the hole to eject the tray. And indeed, the tray is a SIM card slot. However:

Inserting a paperclip in the hole instantly renders your device useless.

As soon as you insert a paperclip, a tamper mechanism is triggered. This mechanism supposedly erases some battery-backed secure storage or burns fuses. Either way, the device will not boot and SumUp will void your warranty.

This raises the question: if using the slot renders the device unusable, why is it there at all? If an employee populates the slot before the battery is connected for the first time, the back cover is still off, so inserting the SIM into an internal slot on the motherboard would be perfectly feasible.

And even if there is a valid reason (for example: the anti-tamper mechanism is only armed after the device is first powered up, the devices are assembled in a single location, and local distributors insert the SIM cards and seal the packaging), I can't help but wonder why there isn't a sticker over the SIM slot (or the hole) that tells the user:

"Removing the SIM tray will trigger the tamper detection system in this device".

That would have been grand, SumUp. None of the booklets in the packaging seem to mention this. Some people might even think this is a "factory reset" hole and press it because their device is malfunctioning or because they want to sell it to someone else.

Other people on the internet have reported that not charging the device for an extended period of time (around 6 months?) may trigger one of the tamper detection mechanisms, as can shocks (from accidentally dropping the device, for example). In all of these cases you're SOL: SumUp has no interest in refurbishing or repurposing these devices, and they will not give you a discount for sending one back.

Repurposing the hardware is pretty difficult since most of the parts that can be easily disassembled have no data sheets or mentions at all on the internet.

Disassembly and teardown

Since I think I've got my point across, the only thing left to do is share some teardown pictures!

To disassemble your SumUp 3G payment terminal, start by removing the two screws on the back of the device. Then slide the back cover downwards. You don't need to apply a lot of force; I broke one of the legs because I was pulling on the cover.

Modem and battery

The Quectel modem, with some unique numbers redacted.

You will be greeted by a cellular modem, in my case a Quectel EG91-EX. This is an LTE (4G) modem with a USB 2.0 interface. The modem in question isn't very special: it's an LTE Cat 1 IoT module that can do 10 Mbps down and 5 Mbps up on LTE. The flex cable connects the modem to the motherboard and also carries the SIM card signals (since the SIM card slot is on the motherboard).

Interestingly, according to the product brochure, this modem can reach much higher speeds on a DC-HSPA+ network (42 Mbps down, 5.76 Mbps up). If I recall correctly, this is what we used to call 3.5G.

Next up is the battery, which is easy to remove: just unclip its connector from the motherboard. The battery is an Amperex Technology PS-GB-304583-010H, a 1200 mAh (4.44 Wh) lithium-ion cell, and it's pretty thin!
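The two figures on the battery label are consistent with each other: a cell's energy rating is its capacity multiplied by its nominal voltage. Assuming the usual 3.7 V nominal voltage for a single lithium-ion cell (my assumption, not something read off this label):

```latex
E = Q \cdot V_{\mathrm{nom}} = 1.2\,\mathrm{Ah} \times 3.7\,\mathrm{V} = 4.44\,\mathrm{Wh}
```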

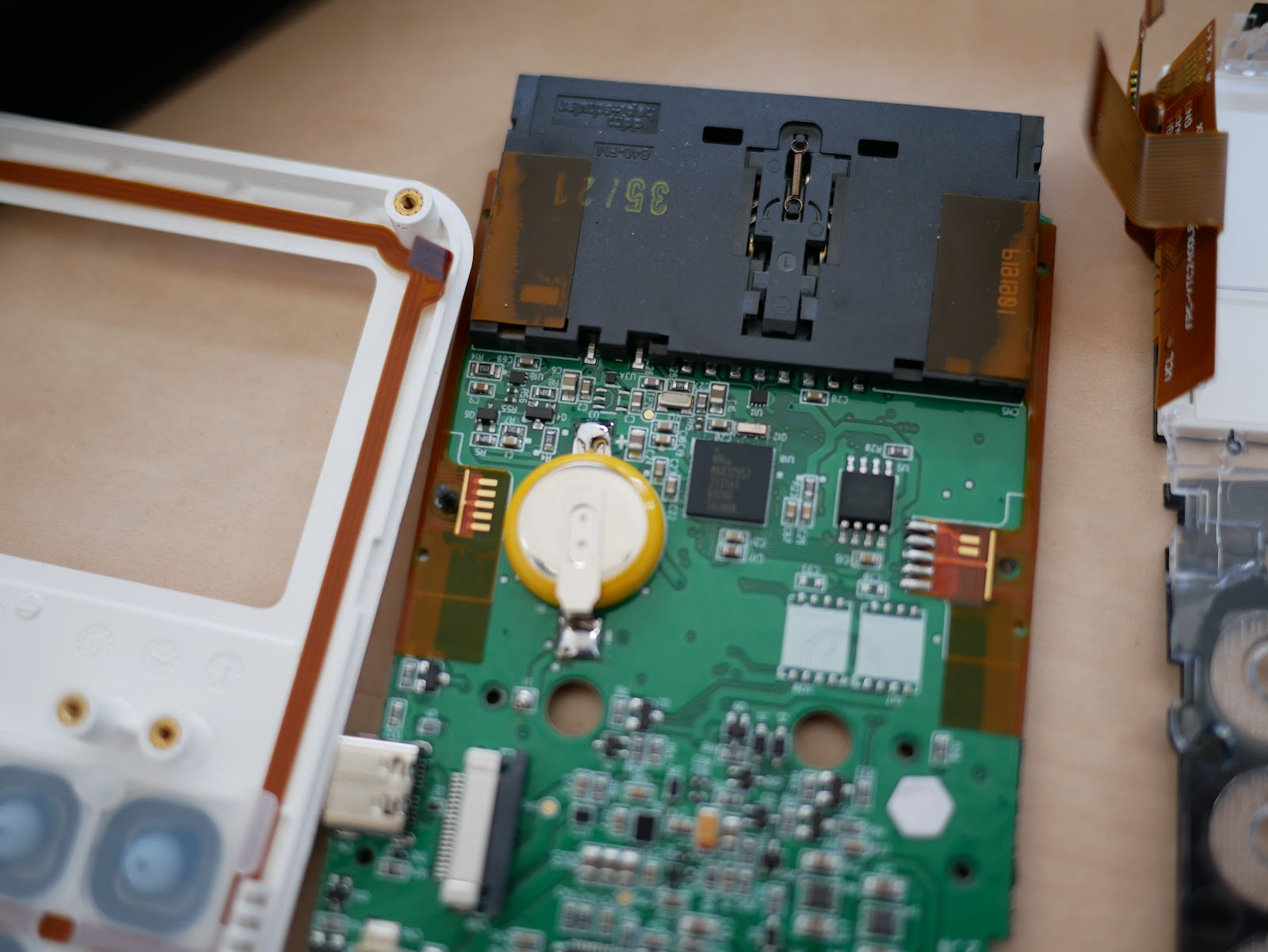

Motherboard back side

From here on I forgot to take a few pictures, but since the device is useless at this point, I don't think anyone really cares how it was disassembled anyway.

This is most of the "back" side of the motherboard (the side you see when you lift the back cover off). One of the most interesting things I noticed is an ESP-WROOM-02U module for the WiFi connectivity. I wouldn't have expected such a ubiquitous part in a "secure design" like this; it seems like an easy attack vector in a payment system.

There also seems to be a separate Bluetooth chip, a TAIYO YUDEN EYSHCNZXZ Bluetooth 4.2 Low Energy module. These modules seem to be based on Nordic Semiconductor nRF chips.

On the left we can see a backup battery (the cell with the yellow insulation) that is presumably used to keep the CPU powered, so it can still trigger the tamper detection when the lithium-ion battery is fully depleted.

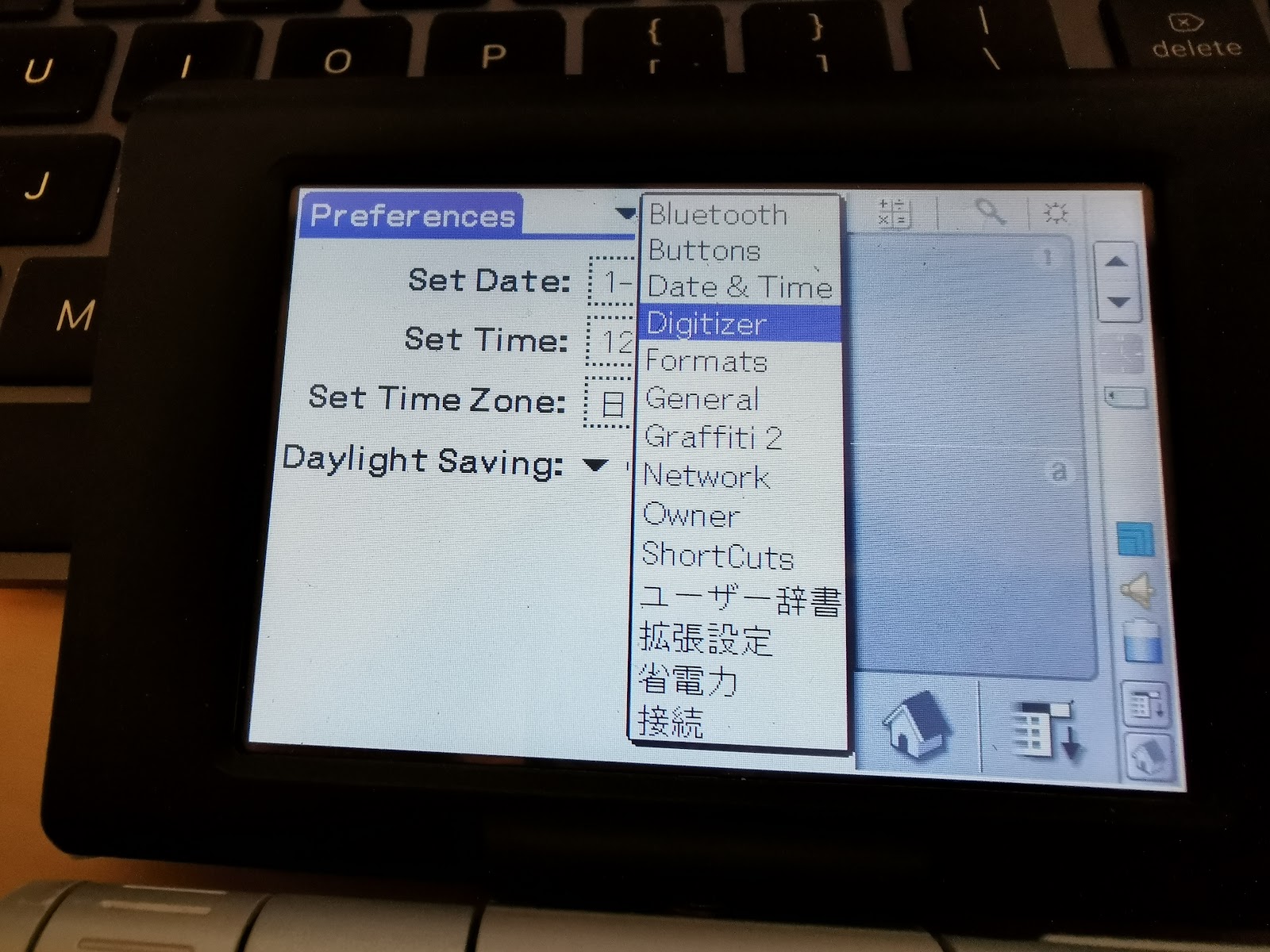

Main CPU (+ bootloader diagnostics)

The main CPU seems to be a MAX32552, which is a "DeepCover Secure ARM Cortex-M3 Flash Microcontroller". Among the chip features we can see:

- 6 External Tamper Sensors with Independent Random Dynamic Patterns

- 256-Bit Flip-Flop-Based Battery-Backup AES Key Storage

- Temperature and Voltage Tamper Monitor

- Secure Boot Loader with Public Key Authentication

Cool, so that probably explains D0 to D5 on the "failure diagnostics" screen, which you can get into by pressing "3" on the "Device Blocked" screen. I remember that D1 was the SIM card holder, so it's possible that SH stands for SIM Holder, but that's just speculation.

Any "sensor" with an inverted background color has been triggered. I've only managed to trigger four of the six, so I'm still looking for resources on how to trigger D0 (BT?) and D5 (BH?).

Furthermore, we can speculate that as soon as any tamper sensor is triggered, the AES key storage is cleared, which prevents the device from booting at all (since it uses Secure Boot). This would also explain why SumUp can't reuse the motherboard after any of the mechanisms has been triggered.

Below the triggered sensors on the display we can see the date and time that the CPU was rendered useless. This is the time when the first sensor was triggered. Any additional triggers will not update the date and time.
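To make the behavior described above concrete (six sensors D0 to D5, a timestamp that only records the first trigger, and a key that is presumably wiped on that first event), here is a toy model. It is a sketch built only from what the diagnostics screen shows plus the speculation above: `TamperLatch` and its methods are hypothetical names, and this is not SumUp's firmware or the MAX32552's real interface.

```python
import os
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional, Set

# Labels as they appear on the "failure diagnostics" screen.
SENSORS = ("D0", "D1", "D2", "D3", "D4", "D5")


@dataclass
class TamperLatch:
    """Toy model of a latching tamper monitor (hypothetical, not SumUp firmware)."""

    # Stand-in for the 256-bit battery-backed AES key.
    aes_key: Optional[bytes] = field(default_factory=lambda: os.urandom(32))
    triggered: Set[str] = field(default_factory=set)
    first_trigger: Optional[datetime] = None

    def trigger(self, sensor: str, when: datetime) -> None:
        if sensor not in SENSORS:
            raise ValueError(f"unknown sensor {sensor!r}")
        self.triggered.add(sensor)
        if self.first_trigger is None:
            # Only the first event is timestamped; later triggers leave it unchanged.
            self.first_trigger = when
            # Assumed: the key is wiped on the first event and never restored.
            self.aes_key = None

    def can_boot(self) -> bool:
        # Without the key, the (assumed) secure boot chain cannot continue.
        return self.aes_key is not None

    def diagnostics(self) -> str:
        # Triggered sensors are bracketed to mimic the inverted background.
        cells = [f"[{s}]" if s in self.triggered else f" {s} " for s in SENSORS]
        stamp = self.first_trigger.isoformat(sep=" ") if self.first_trigger else "-"
        return " ".join(cells) + "\n" + stamp


if __name__ == "__main__":
    latch = TamperLatch()
    latch.trigger("D1", datetime(2023, 5, 1, 12, 0))  # e.g. SIM tray ejected
    latch.trigger("D3", datetime(2023, 5, 2, 9, 30))  # a later, unrelated event
    print(latch.can_boot())  # False
    print(latch.diagnostics())  # timestamp line stays at 2023-05-01 12:00:00
```

The dates in the usage example are made up; the point is that the second trigger adds a bracketed sensor but does not move the timestamp, which matches what the screen shows.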

Card reader tamper shield

There is also a tamper shield around the card reader module: a very interesting mesh that prevents any poking around. The foil seems to be stuck to a plastic frame, and I noticed some air bubbles between the foil and the frame. I also saw some damage to the foil at the bottom that was already present when I opened the device.

The idea with these meshes is that the traces all conduct electricity. As soon as the resistance across the mesh changes, the tamper mechanism triggers. I wonder if a big magnet would be able to trigger this mechanism as well.
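The paragraph above frames the mesh in terms of resistance. The MAX32552 feature list mentions "External Tamper Sensors with Independent Random Dynamic Patterns", which suggests a related approach: drive a fresh random bit onto each trace and check that the same bit comes back at the other end. I haven't verified how this shield is actually wired to the CPU, so the sketch below is a toy model under assumptions: the function names are hypothetical, an open trace is assumed to read back as 0, and a short is simplified to one trace copying another.

```python
import random
from typing import Callable, List, Optional

# A mesh maps the bits driven onto each trace to the bits read back at the far end.
Mesh = Callable[[List[int]], List[int]]


def intact(driven: List[int]) -> List[int]:
    return list(driven)


def cut_trace(index: int) -> Mesh:
    def mesh(driven: List[int]) -> List[int]:
        out = list(driven)
        out[index] = 0  # open circuit, assumed to read back as 0 (pulled low)
        return out
    return mesh


def shorted(a: int, b: int) -> Mesh:
    def mesh(driven: List[int]) -> List[int]:
        out = list(driven)
        out[b] = out[a]  # simplification: trace b now follows trace a
        return out
    return mesh


def mesh_tampered(mesh: Mesh, n_traces: int, rounds: int = 32,
                  rng: Optional[random.Random] = None) -> bool:
    """Drive fresh random bits each round; any mismatch counts as tampering."""
    rng = rng or random.Random()
    for _ in range(rounds):
        pattern = [rng.getrandbits(1) for _ in range(n_traces)]
        if mesh(pattern) != pattern:
            return True
    return False


if __name__ == "__main__":
    rng = random.Random(0)
    print(mesh_tampered(intact, 6, rng=rng))  # False
    # Each check below misses only if all 32 rounds happen to hide the fault (p = 2**-32).
    print(mesh_tampered(cut_trace(2), 6, rng=rng))
    print(mesh_tampered(shorted(0, 1), 6, rng=rng))
```

The random patterns are the interesting design choice: a static check could be fooled by holding a sense line at the expected level, whereas changing bits force an attacker to reproduce every bit in every round.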

Underneath the shield we can see the actual card reader. I couldn't find a model number on the black housing.

Here we can also see the gold fingers (five on the left, two on the right) that connect to the underside of the card reader tamper shield. The underside of the tamper mesh has zebra strips.

Other parts

- The flex-board chip that probably drives the touch screen input: FPC-MTP240-014DAAMFIL

- Part number on the flex cable that carries the display signals: YTC240DLBU-02-300C0V3

- Other side of the display signal flex cable: FPC-YTC2400DLBU-02-200N

Conclusion

I'm a bit disappointed that SumUp doesn't make customers aware of the tamper detection mechanism and the kinds of things that can trigger it. Most people these days have put a SIM card in their smartphone at least once, or have seen someone do it for them. Curiosity isn't a bad thing. And I'm completely fine with SumUp deciding "if you tamper with our device, we will void the warranty". But please, pretty please: be upfront about what kinds of actions can trigger the tamper detection mechanism.

This is a mobile payment device. Customers will have it in their hands when they insert their payment card in the bottom, and obviously a device gets dropped every now and then. That's unfortunate, but nothing a refurbishment couldn't solve.

Right now, a small business would need to have a second device on standby if they rely on card payments simply because the tamper mechanism might trigger. This second device has to be charged every few months to prevent the device from bricking itself. Imagine a church that has an annual charity sale that is run by volunteers. They decide to use a SumUp device and after the event they store it in a box for next year. Next year the device is bricked because the battery depleted over time. Why isn't the user informed about this tamper mechanism? Why do people have to find out on their own and bear the cost of purchasing another of these booby-trapped devices?

And don't just display some things that can trigger the mechanism on a FAQ page on your website. Place a sticker on the product packaging. Place it over the sim slot. Place it over the screen. I don't care.

Just make sure that people like me (who DO read these stickers) are aware of the actions that can trigger the tamper detection sensors.

Something like this: